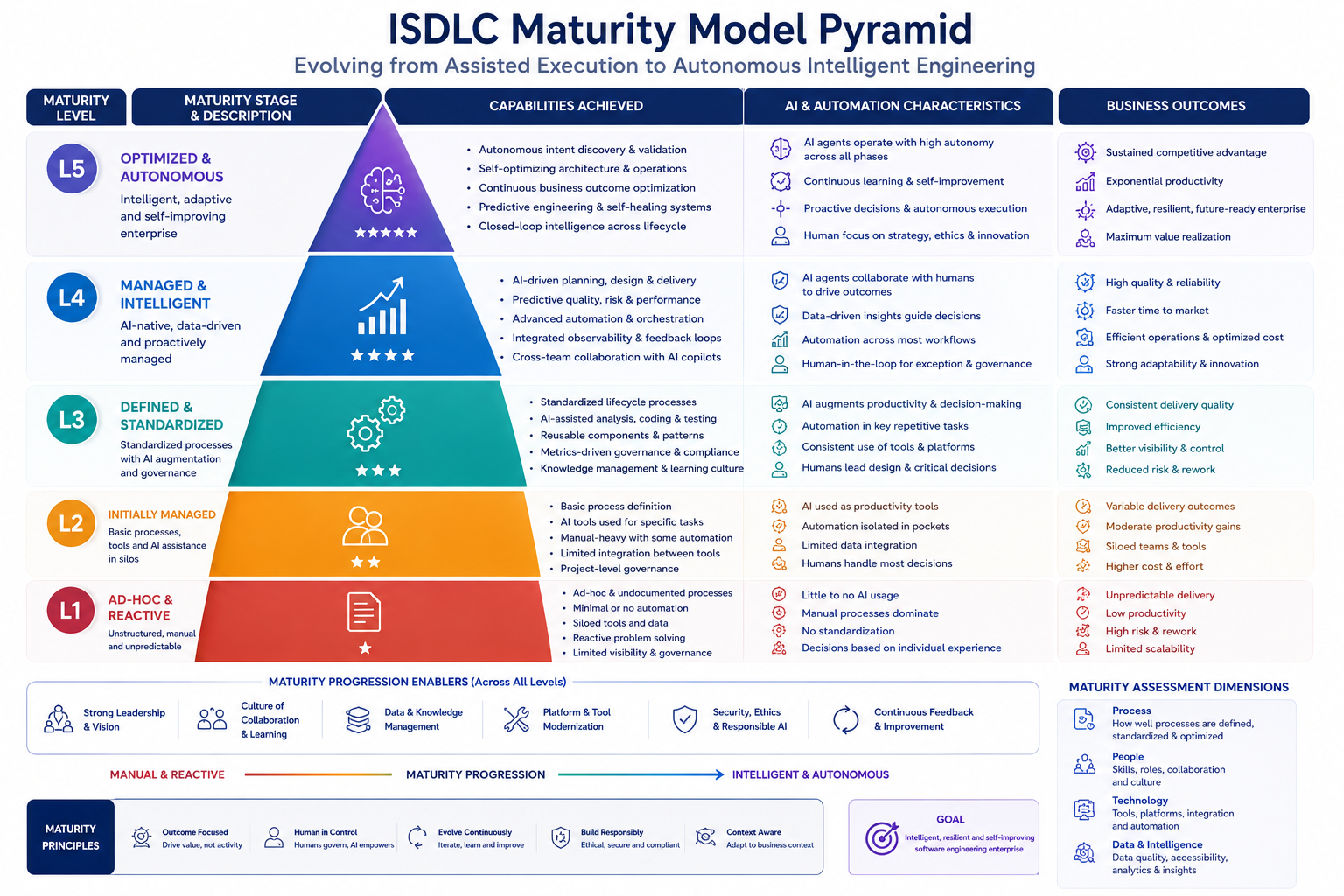

Understanding the Maturity Model

The ISDLC Maturity Model provides a framework for organizations to assess their current AI-augmented development capabilities and chart a path toward higher levels of automation, efficiency, and quality.

Organizations typically evolve through these stages sequentially, though some may skip levels or adopt capabilities unevenly across different teams or projects.

📊 How to Use This Model

Assess your current state honestly against the characteristics and capabilities described for each level. Use the gaps identified to prioritize improvement initiatives. Most organizations start at Level 1 or 2 when beginning their AI-augmented development journey.

Level 1: Initial / Ad-hoc

Very limited AI use; processes reactive

Characteristics

- AI tools used sporadically by individual developers

- No standardized processes or governance

- AI outputs treated as experimental, ad-hoc

- No formal approval processes for AI-generated code

- Limited or no context management

- Success depends on individual initiative

Typical Capabilities

- A few developers experimenting with GitHub Copilot or ChatGPT

- Occasional use of AI for code completion or documentation

- No tracking of AI tool usage or effectiveness

- Traditional SDLC processes still dominant

🎯 To Advance to Level 2

- Conduct pilot projects with AI coding assistants

- Document basic guidelines for AI tool usage

- Identify and train AI champions within teams

- Establish initial code review practices for AI-generated code

Level 2: Defined / Task-level AI

AI used for isolated tasks; emerging standards

Characteristics

- AI assistants piloted for specific tasks (coding, testing, docs)

- Basic documentation of roles and responsibilities

- Initial guidelines for AI usage and review

- Some logging of AI interactions

- Individual teams using AI with local practices

- Manual review processes established

Typical Capabilities

- Standard AI coding tools (Copilot, Amazon Q) deployed to teams

- Code review checklists include AI-generated code checks

- Basic audit logs of AI tool usage

- Initial security scanning integrated into workflows

- Documentation owners identified for each phase

🎯 To Advance to Level 3

- Implement end-to-end CI/CD pipelines with AI integration

- Establish context management system (knowledge base, RAG)

- Create cross-functional AI governance committee

- Deploy consistent AI tools and practices across all teams

- Develop metrics to measure AI effectiveness

Level 3: Integrated / Project-level AI

AI integrated into development and testing workflows

Characteristics

- End-to-end CI/CD pipelines with AI-powered steps

- Spec-driven development practices adopted

- Persistent context store (vector DB, knowledge repository)

- Automated test generation alongside code

- Standardized workflows across development teams

- Basic metrics tracking AI contribution

Typical Capabilities

- RAG pipelines feeding context to AI agents at each phase

- Automated code and test generation from specifications

- Security scans (SAST/SCA) integrated in all pipelines

- Requirements traceability from intent through deployment

- Human-in-the-loop checkpoints at phase boundaries

- Consistent documentation templates and artifacts
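
Human-in-the-loop checkpoints at phase boundaries can be pictured as a pipeline that pauses for approval before each phase hands off to the next. The following is a minimal sketch rather than ISDLC tooling: the phase names are the five ISDLC phases, while `run_pipeline`, `run_phase`, and `approve` are hypothetical names introduced only for illustration.

```python
from typing import Callable, List

# The five ISDLC phases, in order.
PHASES = ["Intend", "Structure", "Develop", "Launch", "Continuously Evolve"]


def run_pipeline(
    run_phase: Callable[[str], str],
    approve: Callable[[str, str], bool],
) -> List[str]:
    """Run phases in order, stopping at the first rejected checkpoint.

    run_phase(phase) returns an artifact summary (a hypothetical stand-in
    for AI-generated output). approve(phase, artifact) represents the human
    decision at the phase boundary. Returns the phases that were approved.
    """
    completed: List[str] = []
    for phase in PHASES:
        artifact = run_phase(phase)
        if not approve(phase, artifact):
            break  # a reviewer rejected the hand-off, so the pipeline halts
        completed.append(phase)
    return completed


if __name__ == "__main__":
    # Hypothetical reviewer who rejects the Launch hand-off.
    approved = run_pipeline(
        run_phase=lambda phase: f"{phase} artifact",
        approve=lambda phase, artifact: phase != "Launch",
    )
    print(approved)
```

In this sketch a rejection stops everything downstream. A real workflow might route the artifact back for rework instead of halting.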

🎯 To Advance to Level 4

- Formalize ISDLC with all five phases and five pillars

- Implement mob elaboration and construction rituals

- Establish comprehensive governance processes and audits

- Deploy advanced monitoring and observability

- Create feedback loops from production to planning

Level 4: Managed / Lifecycle AI

AI spans multiple phases with continuous monitoring

Characteristics

- Formal ISDLC implementation across all phases

- Defined mob rituals and collaborative AI sessions

- Comprehensive governance with audits and approvals

- Full pillar implementation (Governance, Context, Evaluation, Automation, Observability)

- Metrics-driven decision making with KPI dashboards

- Continuous feedback from production to planning

Typical Capabilities

- AI agents operate across all five phases seamlessly

- Automated governance checks with policy enforcement

- Advanced context engineering (ACE) with memory management

- Real-time quality gates and automated decision points

- Production insights automatically generate improvement backlog

- Comprehensive audit trails for compliance

- Cycle time reduced from weeks to hours or days

🎯 To Advance to Level 5

- Enable autonomous AI agents with human oversight

- Implement self-healing and auto-remediation systems

- Deploy predictive analytics for proactive optimization

- Create self-improving feedback loops with ML

- Establish continuous innovation and experimentation culture

Level 5: Optimized / Autonomous DevOps

Self-improving, predictive systems with minimal intervention

Characteristics

- Self-healing deployments with automatic issue resolution

- Proactive optimization driven by predictive analytics

- Advanced RAG/ACE with sophisticated context management

- Continuous improvement loop fully automated

- AI agents making autonomous decisions within guardrails

- Minimal human intervention required for routine operations

Typical Capabilities

- AI predicts and prevents issues before they occur

- Autonomous code refactoring and optimization

- Self-adjusting architectures based on usage patterns

- Automated capacity planning and resource optimization

- AI-driven innovation suggesting new features and improvements

- Continuous learning from production data to improve AI models

- Near-zero downtime with predictive maintenance

- Humans focus on strategy, innovation, and exception handling

⚠️ Level 5 Considerations

Level 5 represents the aspirational state. Few organizations have achieved this level comprehensively. Key challenges include ensuring proper governance of autonomous systems, maintaining human oversight, and managing the cultural shift to trusting AI-driven decisions.

Self-Assessment Guide

Evaluate your organization across the five ISDLC pillars to determine your maturity level:

| Pillar | Level 1-2 | Level 3 | Level 4-5 |
|---|---|---|---|
| Governance | Ad-hoc reviews, manual approvals, basic logging | Standardized review processes, audit logs, policy checks | Automated governance, comprehensive audits, predictive compliance |
| Context | Local documentation, no knowledge management | Centralized knowledge base, basic RAG pipelines | Advanced ACE, semantic context, self-updating knowledge |
| Evaluation | Manual testing, occasional scans, reactive quality checks | Automated test suites, continuous scanning, quality gates | Predictive quality, AI-driven test generation, proactive validation |
| Automation | Manual builds/deploys, scripts for common tasks | Full CI/CD, IaC, automated pipelines | Autonomous agents, self-healing, predictive automation |
| Observability | Basic monitoring, reactive alerts | Comprehensive telemetry, dashboards, anomaly detection | Predictive monitoring, auto-remediation, self-optimizing systems |
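
A per-pillar assessment can be rolled up into a single maturity band with a few lines of code. The sketch below assumes that the weakest pillar caps overall maturity. The guide does not prescribe an aggregation rule, so treat that choice, along with the function names `overall_band` and `gaps`, as illustrative assumptions.

```python
from typing import Dict, List

PILLARS = ("Governance", "Context", "Evaluation", "Automation", "Observability")

# Bands match the table columns, ordered from least to most mature.
BANDS = ("Level 1-2", "Level 3", "Level 4-5")


def overall_band(ratings: Dict[str, str]) -> str:
    """Return the lowest band across all five pillars.

    Assumption: the weakest pillar caps overall maturity.
    """
    missing = [p for p in PILLARS if p not in ratings]
    if missing:
        raise ValueError(f"missing pillar ratings: {missing}")
    return min((ratings[p] for p in PILLARS), key=BANDS.index)


def gaps(ratings: Dict[str, str], target: str) -> List[str]:
    """List pillars rated below the target band, to prioritize improvements."""
    return [p for p in PILLARS if BANDS.index(ratings[p]) < BANDS.index(target)]


if __name__ == "__main__":
    # Hypothetical self-assessment for one organization.
    ratings = {
        "Governance": "Level 3",
        "Context": "Level 1-2",
        "Evaluation": "Level 3",
        "Automation": "Level 4-5",
        "Observability": "Level 3",
    }
    print(overall_band(ratings))
    print(gaps(ratings, "Level 3"))
```

An averaging rule would hide a lagging pillar behind stronger ones. Taking the minimum keeps the gap visible, which fits the guide's advice to use identified gaps to prioritize improvement initiatives.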

📈 Maturity Evolution Timeline

Typical progression times (with dedicated resources and executive support):

- Level 1 → Level 2: 3-6 months (pilot projects, initial training)

- Level 2 → Level 3: 6-12 months (infrastructure setup, process standardization)

- Level 3 → Level 4: 12-18 months (full ISDLC implementation, cultural transformation)

- Level 4 → Level 5: 18-24+ months (advanced AI, autonomous systems)

These timelines vary significantly based on organization size, existing DevOps maturity, cultural readiness, and available resources.
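
Summing the per-transition ranges gives a rough end-to-end estimate. This sketch simply adds the figures listed above; the function name `cumulative_months` is hypothetical, and because the last transition is open-ended ("24+"), any upper bound that includes it should be read as a minimum.

```python
from typing import Dict, Tuple

# (min_months, max_months) for each transition, taken from the list above.
# The Level 4 -> 5 upper bound is open-ended ("24+"), so 24 is a floor there.
TRANSITIONS: Dict[Tuple[int, int], Tuple[int, int]] = {
    (1, 2): (3, 6),
    (2, 3): (6, 12),
    (3, 4): (12, 18),
    (4, 5): (18, 24),
}


def cumulative_months(start: int, target: int) -> Tuple[int, int]:
    """Sum the typical ranges for every transition from start to target."""
    if not 1 <= start <= target <= 5:
        raise ValueError("levels must satisfy 1 <= start <= target <= 5")
    low = sum(TRANSITIONS[(lvl, lvl + 1)][0] for lvl in range(start, target))
    high = sum(TRANSITIONS[(lvl, lvl + 1)][1] for lvl in range(start, target))
    return low, high


if __name__ == "__main__":
    print(cumulative_months(1, 3))  # (9, 18)
    print(cumulative_months(1, 5))  # (39, 60), upper bound open-ended
```

By these figures, moving from Level 1 to Level 3 takes roughly 9 to 18 months, and the full path to Level 5 takes at least 39 months.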

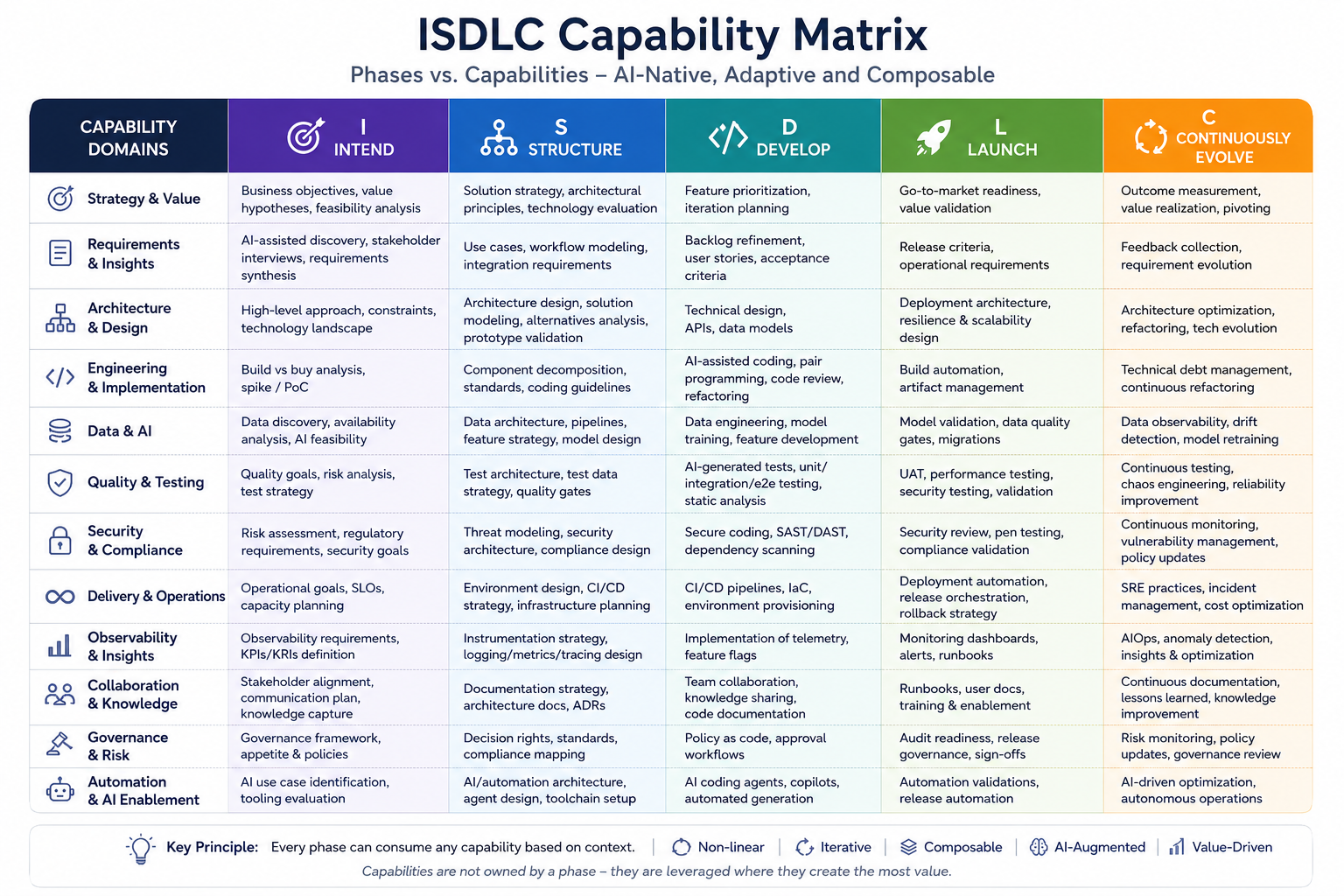

Capability-Based Phase Consumption

Organizations don't need to adopt all phases and pillars at once. ISDLC is composable: select and implement capabilities based on your specific needs and maturity level.

| Phase | Reusable Capability Examples | Adoption Priority |
|---|---|---|
| Intend | AI requirement mining, feasibility analysis, stakeholder alignment | High - Foundation for all downstream work |
| Structure | Architecture modeling, integration strategy, design alternatives | High - Critical for scalable systems |
| Develop | AI coding agents, automated testing, documentation generation | Very High - Immediate productivity gains |
| Launch | Release orchestration, compliance automation, deployment pipelines | High - Reduces deployment risk and time |
| Continuously Evolve | Observability, optimization, modernization, feedback loops | Medium-High - Enables continuous improvement |
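
Because adoption is composable, the priorities in the table can be turned into a suggested starting order. This is a small sketch under stated assumptions: the numeric ranks and the function name `adoption_order` are not part of ISDLC, and ties keep the lifecycle order shown in the table.

```python
from typing import List, Tuple

# Lower rank means adopt earlier. The numeric ranks are an assumption;
# the source only gives the labels.
PRIORITY_RANK = {"Very High": 0, "High": 1, "Medium-High": 2}

# (phase, adoption priority) in lifecycle order, as listed in the table.
PHASE_PRIORITY: List[Tuple[str, str]] = [
    ("Intend", "High"),
    ("Structure", "High"),
    ("Develop", "Very High"),
    ("Launch", "High"),
    ("Continuously Evolve", "Medium-High"),
]


def adoption_order() -> List[str]:
    """Order phases by priority; sorted() is stable, so ties keep table order."""
    ranked = sorted(PHASE_PRIORITY, key=lambda item: PRIORITY_RANK[item[1]])
    return [phase for phase, _ in ranked]


if __name__ == "__main__":
    print(adoption_order())
```

The result puts Develop first, followed by Intend, Structure, and Launch, with Continuously Evolve last. An organization with strong existing observability might reasonably reorder this to suit its own needs.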