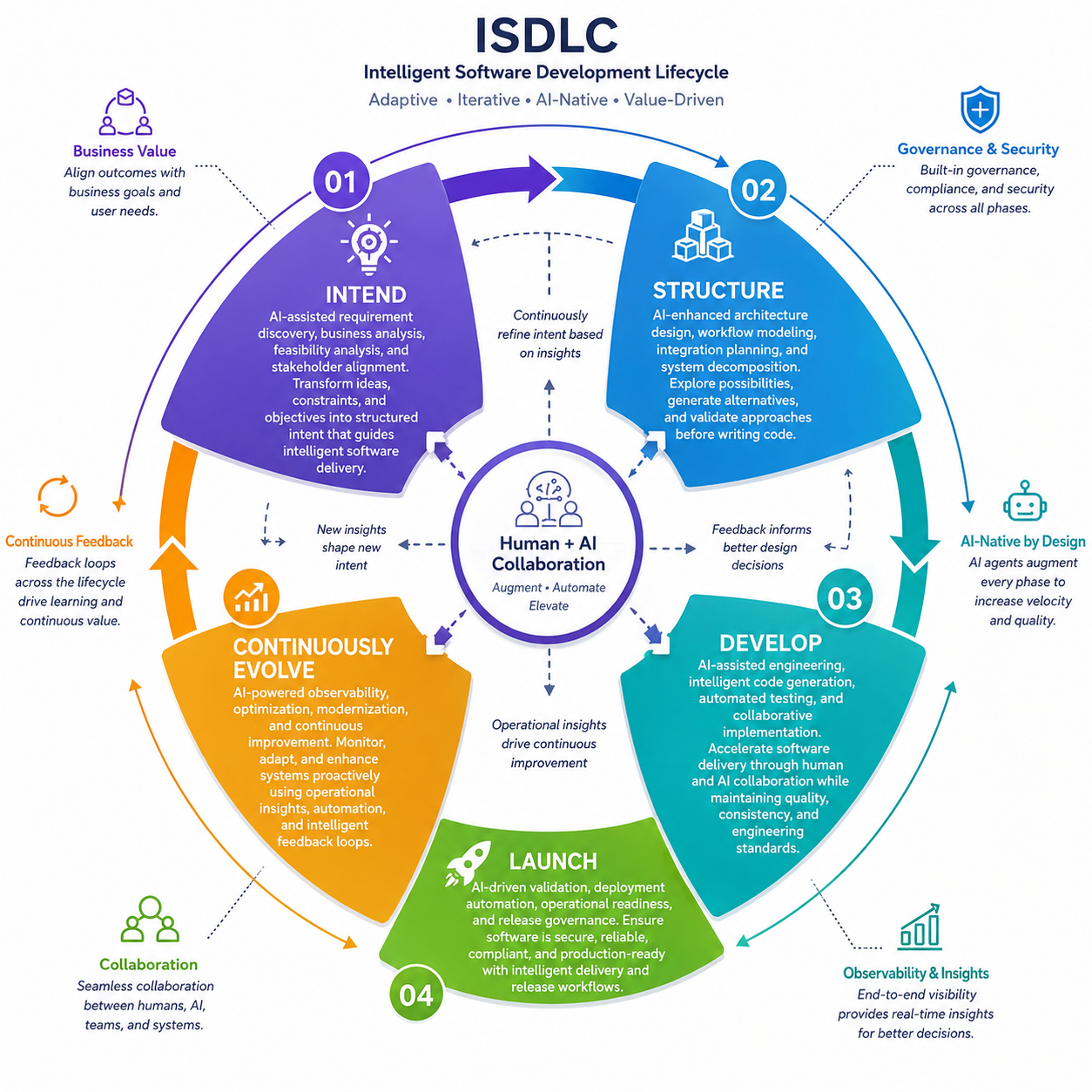

Intend

"AI-assisted requirement discovery, business analysis, feasibility analysis, and stakeholder alignment. Transform ideas, constraints, and objectives into structured intent that guides intelligent software delivery."

What Happens in This Phase

AI tools (LLMs, knowledge graphs) consume business inputs such as ideas, user feedback, and existing documentation, then propose requirements, user stories, and acceptance criteria. Humans review, refine, and sign off before any design or code begins.

Key Outputs

- Project Charter / Vision: Business objectives, scope, stakeholders, success metrics

- Requirements Specification: Functional and non-functional requirements with acceptance criteria

- Context Glossary: Domain terms, data dictionary, business rules

- Risk & Compliance Register: Identified risks, mitigations, compliance requirements

Roles & Tools

Key Roles

- Product Owner

- Business Analysts

- AI Agents (requirements extraction)

Technologies

- LLMs (ChatGPT, Claude, Amazon Q Developer)

- Confluence / Jira

- Vector databases (RAG)

- Knowledge bases

Success Criteria

- Goals are clear and measurable

- Scope boundaries are defined

- All stakeholders have signed off

- Each requirement has acceptance criteria

- Requirements are traceable to stakeholder needs

💡 Best Practices

- Define security requirements and data classifications upfront

- Establish audit logging for all key decisions

- Use AI to analyze user feedback and market research

- Create reusable requirement templates with AI assistance

- Maintain a requirements traceability matrix from day one
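
One way to picture a traceability matrix is as a mapping from stakeholder needs to the requirements that address them, plus a check for requirements that lack acceptance criteria or a source need. The sketch below is illustrative only: the `Requirement` fields, the `REQ-`/`NEED-` ID scheme, and the sample data are assumptions, not part of any specific tool.

```python
from dataclasses import dataclass, field


@dataclass
class Requirement:
    req_id: str  # hypothetical ID scheme, e.g. "REQ-001"
    text: str
    stakeholder_needs: list[str] = field(default_factory=list)
    acceptance_criteria: list[str] = field(default_factory=list)


def build_traceability_matrix(requirements):
    """Map each stakeholder need to the requirement IDs that address it."""
    matrix = {}
    for req in requirements:
        for need in req.stakeholder_needs:
            matrix.setdefault(need, []).append(req.req_id)
    return matrix


def find_gaps(requirements):
    """List requirement IDs missing acceptance criteria or a stakeholder need."""
    return {
        "missing_acceptance_criteria": [
            r.req_id for r in requirements if not r.acceptance_criteria
        ],
        "untraceable": [r.req_id for r in requirements if not r.stakeholder_needs],
    }


# Made-up sample data for illustration.
requirements = [
    Requirement("REQ-001", "Users can reset their password by email.",
                ["NEED-ACCOUNT-RECOVERY"], ["Reset link expires after 30 minutes."]),
    Requirement("REQ-002", "Password reset attempts are audit-logged.",
                ["NEED-ACCOUNT-RECOVERY", "NEED-AUDIT"], []),
    Requirement("REQ-003", "Dashboard loads quickly.",
                [], ["p95 load time under 2 s."]),
]

matrix = build_traceability_matrix(requirements)
gaps = find_gaps(requirements)
print(matrix)
print(gaps)
```

Running a check like this whenever requirements change would surface gaps before sign-off rather than after design has started.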

Structure

"AI-enhanced architecture design, workflow modeling, integration planning, and system decomposition. Define scalable foundations, operational boundaries, and implementation strategies before development begins."

What Happens in This Phase

AI proposes system and software architecture including component diagrams, data flows, and API schemas. It generates multiple design alternatives and analyzes trade-offs. Humans validate and choose the final architecture and design strategy.
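
The trade-off analysis described above could be made explicit with a weighted decision matrix that humans can inspect and challenge. The following is a minimal sketch under stated assumptions: the criteria, weights, 1-to-5 scores, and alternative names are all hypothetical placeholders that a team would replace with its own.

```python
def score_alternatives(alternatives, weights):
    """Rank alternatives by the weighted average of their criterion scores."""
    total_weight = sum(weights.values())
    ranked = []
    for name, scores in alternatives.items():
        weighted = sum(weights[c] * scores[c] for c in weights) / total_weight
        ranked.append((name, round(weighted, 2)))
    # Highest weighted score first.
    return sorted(ranked, key=lambda item: item[1], reverse=True)


# Hypothetical criteria weights (higher means more important).
weights = {"scalability": 3, "operational_cost": 2, "time_to_deliver": 1}

# Hypothetical scores from 1 (poor) to 5 (good) per alternative.
alternatives = {
    "modular_monolith": {"scalability": 3, "operational_cost": 5, "time_to_deliver": 5},
    "microservices": {"scalability": 5, "operational_cost": 2, "time_to_deliver": 2},
    "serverless": {"scalability": 4, "operational_cost": 4, "time_to_deliver": 3},
}

ranking = score_alternatives(alternatives, weights)
for name, score in ranking:
    print(f"{name}: {score}")
```

The ranking is only as good as the weights, so the numbers are best treated as input to the human architecture decision rather than as the decision itself.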

Key Outputs

- Architecture Design Document: Component diagrams, data flows, technology stack decisions

- Solution Blueprint: Integration plans, service interactions, external interfaces

- Interface / API Specifications: Endpoint definitions, data schemas, contracts

- Operational Constraints: SLAs, performance targets, security requirements, failover strategies

Roles & Tools

Key Roles

- Solution Architects

- Development Leads

- AI Agents (design assistance)

Technologies

- Modeling tools (draw.io, Lucidchart)

- LLM-aided design analysis

- API spec generators (OpenAPI)

- Security policy tools

Success Criteria

- All components and responsibilities are defined

- Architecture has been reviewed and vetted

- API specifications are complete and validated

- No major integration gaps identified

- All SLAs and security controls documented

💡 Best Practices

- Perform early threat modeling and architecture reviews

- Use AI to generate multiple architecture alternatives

- Document data flows to identify sensitive areas

- Validate cloud service choices against enterprise standards

- Create architecture decision records (ADRs) for traceability

Develop

"AI-assisted engineering, intelligent code generation, automated testing, and collaborative implementation. Accelerate software delivery through human and AI collaboration while maintaining quality, consistency, and engineering standards."

What Happens in This Phase

AI generates source code, test cases, configuration files (IaC/CI-CD scripts), and documentation from the design spec. Developers review AI-generated code in real time, provide clarifications or corrections, and integrate it into the codebase. This "mob construction" approach aims to deliver features in hours rather than weeks.

Key Outputs

- Source Code & Documentation: Generated classes, modules, inline comments, README files

- Unit & Integration Test Suites: Automated test scenarios with high coverage

- CI/CD Pipeline Configuration: Build scripts, deployment automation, environment configs

- Code Review Artifacts: Review comments, security scan results, compliance checks

Roles & Tools

Key Roles

- Developers (reviewers/guides)

- QA Engineers

- AI Agents (code & test generation)

Technologies

- GitHub Copilot / Amazon Q / Claude

- CI tools (Jenkins, GitHub Actions)

- SAST/SCA tools (Snyk, SonarQube)

- Secret scanners

Success Criteria

- Code follows style guide and standards

- Automated tests exist for all requirements

- Code coverage meets or exceeds targets

- All security scans pass

- CI/CD pipelines run successfully

- Human review completed on all AI-generated code
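
Several of these criteria can be checked mechanically as a merge gate. The sketch below is an assumption-laden illustration: `ChangeStatus`, its field names, and the 80% default coverage target are hypothetical, and it does not cover the criterion that tests exist for all requirements.

```python
from dataclasses import dataclass


@dataclass
class ChangeStatus:
    """Facts about one proposed change; every field name here is illustrative."""
    style_check_passed: bool
    coverage_percent: float
    open_security_findings: int
    pipeline_passed: bool
    ai_generated: bool
    human_approved: bool


def merge_blockers(status, coverage_target=80.0):
    """Return the reasons a change may not merge; an empty list means it may."""
    blockers = []
    if not status.style_check_passed:
        blockers.append("style check failed")
    if status.coverage_percent < coverage_target:
        blockers.append(
            f"coverage {status.coverage_percent:.1f}% is below {coverage_target:.1f}%"
        )
    if status.open_security_findings > 0:
        blockers.append(f"{status.open_security_findings} open security finding(s)")
    if not status.pipeline_passed:
        blockers.append("CI/CD pipeline failed")
    if status.ai_generated and not status.human_approved:
        blockers.append("AI-generated code lacks human sign-off")
    return blockers


change = ChangeStatus(
    style_check_passed=True,
    coverage_percent=72.5,
    open_security_findings=0,
    pipeline_passed=True,
    ai_generated=True,
    human_approved=False,
)
blockers = merge_blockers(change)
print(blockers)
```

Returning the full list of blockers, rather than stopping at the first failure, lets a reviewer see everything that still needs attention in one pass.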

💡 Best Practices

- Adopt "mob construction" sessions that pair the team with AI

- Run security scans (SAST/SCA/secrets) on every commit

- Require human sign-off on AI-generated code before merge

- Use AI to generate test cases alongside production code

- Maintain code comments referencing requirement IDs

- Store AI prompts and outputs for traceability
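
If code comments reference requirement IDs, a small script can report which requirements are not yet mentioned anywhere in the code. This is a rough sketch: the `REQ-<number>` format, the file names, and the sample sources are assumptions, and the comment detection is deliberately naive (it treats everything after the first `#` on a line as a comment).

```python
import re

REQ_ID_PATTERN = re.compile(r"\bREQ-\d+\b")  # hypothetical requirement ID format


def requirement_ids_in_source(source_text):
    """Collect requirement IDs that appear after a '#' on any line."""
    found = set()
    for line in source_text.splitlines():
        _, marker, comment = line.partition("#")
        if marker:  # the line contains a '#', so 'comment' is the text after it
            found.update(REQ_ID_PATTERN.findall(comment))
    return found


def uncovered_requirements(expected_ids, sources):
    """Return expected requirement IDs that no source file references."""
    covered = set()
    for text in sources.values():
        covered |= requirement_ids_in_source(text)
    return sorted(set(expected_ids) - covered)


# Made-up file contents for illustration.
sources = {
    "auth.py": (
        "def reset_password(user):\n"
        "    # Implements REQ-001 (password reset by email)\n"
        "    pass\n"
    ),
    "audit.py": "def log_event(event):  # REQ-002\n    pass\n",
}

missing = uncovered_requirements(["REQ-001", "REQ-002", "REQ-003"], sources)
print(missing)
```

A real codebase would likely need a proper tokenizer or language-specific comment parsing, but even a check this simple can flag requirements that have no visible link to code.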

Launch

"AI-driven validation, deployment automation, operational readiness, and release governance. Ensure software is secure, reliable, compliant, and production-ready with intelligent delivery and release workflows."

What Happens in This Phase

AI orchestrates build, containerization, and deployment (e.g., Kubernetes manifests) while auto-running test suites. Automated security scanners validate all artifacts. Humans perform final approvals and validate that production behavior matches expectations before or during roll-out.

Key Outputs

- Release Plan & Checklist: Release schedule, rollback procedures, communication plan

- Deployment Manifests: IaC templates (Terraform, Helm), container configs, infrastructure definitions

- Runbook / Operations Guide: Launch procedures, troubleshooting steps, known issues

- Test Report Summary: Final validation results, defect log, performance metrics vs. SLAs

Roles & Tools

Key Roles

- DevOps Engineers

- Site Reliability Engineers

- AI Agents (deployment orchestration)

Technologies

- IaC (Terraform, CloudFormation)

- Kubernetes / container orchestration

- AWS/GCP/Azure deployment services

- Monitoring & observability tools

Success Criteria

- All test phases passed (unit, integration, system)

- No unresolved high-priority defects

- Infrastructure deployed successfully in staging

- Metrics baseline captured

- Runbook entries verified through drills

- All approvals recorded and audit trail complete

💡 Best Practices

- Use canary or blue-green deployments with monitoring

- Implement automated rollback on failure detection

- Validate runtime configurations match security reviews

- Maintain approval workflow for all production changes

- Run IaC scanning and container scanning in pipelines

- Test disaster recovery procedures before go-live
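
The canary and automated-rollback practices above come down to a decision rule that compares canary metrics with the baseline. The sketch below shows one possible rule; the function name, the thresholds (minimum sample size, 50% allowed relative increase, absolute floor), and the use of error rate as the only signal are assumptions for illustration.

```python
def canary_decision(
    baseline_error_rate,
    canary_error_rate,
    canary_requests,
    min_requests=500,
    max_relative_increase=0.5,
    absolute_floor=0.001,
):
    """Return 'wait', 'promote', or 'rollback' for a canary release."""
    # Too little traffic to judge: keep the canary running.
    if canary_requests < min_requests:
        return "wait"
    # Allow a bounded increase over baseline; the floor avoids rolling back
    # on noise when the baseline error rate is close to zero.
    allowed = max(baseline_error_rate * (1 + max_relative_increase), absolute_floor)
    return "rollback" if canary_error_rate > allowed else "promote"


print(canary_decision(0.01, 0.012, canary_requests=1000))
print(canary_decision(0.01, 0.03, canary_requests=1000))
print(canary_decision(0.01, 0.03, canary_requests=100))
```

In practice the same rule would usually consider latency and saturation as well, and a "rollback" result would trigger the rollback procedure documented in the release plan.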

Continuously Evolve

"AI-powered observability, optimization, modernization, and continuous improvement. Monitor, adapt, and enhance systems proactively using operational insights, automation, and intelligent feedback loops."

What Happens in This Phase

After launch, AI continuously monitors production (logs, metrics, user feedback) to detect anomalies and performance regressions. It can propose code fixes or infrastructure optimizations. Humans refine requirements based on real usage. This creates a closed loop: production insights flow back to the Intend phase for the next iteration.
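
As a simple stand-in for the anomaly detection described above, the sketch below flags metric samples that deviate sharply from the preceding window using a rolling z-score. It is not a claim about how any particular monitoring product works; the window size, threshold, and latency values are made-up assumptions.

```python
from statistics import mean, stdev


def detect_anomalies(values, window=5, threshold=3.0):
    """Return indices whose value is more than `threshold` standard deviations
    away from the mean of the preceding `window` samples."""
    anomalies = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            # Flat history: any change at all counts as a deviation.
            if values[i] != mu:
                anomalies.append(i)
            continue
        if abs(values[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical request latencies in milliseconds, with one spike.
latencies_ms = [120, 118, 122, 121, 119, 120, 123, 410, 121, 120]
anomalous_indices = detect_anomalies(latencies_ms)
print(anomalous_indices)
```

Note that a spike inflates the statistics of the windows that follow it, so later samples are judged against a distorted baseline; production systems typically use more robust methods, and flagged samples would feed the improvement backlog after human review.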

Key Outputs

- Monitoring Dashboards: Live metrics, system health indicators, SLA tracking

- Incident & Postmortem Reports: Timeline, root cause analysis, action items

- Improvement Backlog: Prioritized features, fixes, and optimizations for next cycles

- Architecture Review Log: Documentation of system changes and evolution over time

Roles & Tools

Key Roles

- Site Reliability Engineers

- Operations Teams

- Data Analysts

- AI Agents (auto-remediation)

Technologies

- Monitoring (Prometheus, Datadog)

- APM & logging (ELK, Splunk)

- Alerting (PagerDuty, Opsgenie)

- MLOps & anomaly detection

Success Criteria

- Dashboards update in real time with accurate data

- Alerts configured for all critical thresholds

- Incident response procedures tested and documented

- All incidents have documented root causes and fixes

- Improvement backlog prioritized by business impact

- Feedback loop established back to Intend phase

💡 Best Practices

- Implement AI-driven anomaly detection and alerting

- Use production insights to generate new requirements

- Automate common remediation tasks with AI agents

- Conduct regular architecture reviews and update documentation

- Track system drift and proactively modernize components

- Maintain feedback channels from users to product teams